[I also thought of giving it the title “guns don’t kill people, people with a poor understanding of Bayes’ theorem do…”]

One of THE most useful things you can learn when it comes to reading a paper is to understand the negative predictive value (NPV) of a test: both what it means and what it doesn’t mean.

In general the NPV is used in a way that makes a test seem better than it really is.

A lot of tests we commonly use (say, D-dimer) have quoted NPVs of 98% or so, which sounds great. If the test is negative in *a bunch of patients similar to those the test was developed in*, then there’s a 98% chance they don’t have the disease. That’s great, isn’t it?

The key is in that qualifier about the population. If the test was developed in a population of patients where only 5% had the disease, then the NPV will be very different when the test is applied to a population where 50% have the disease.

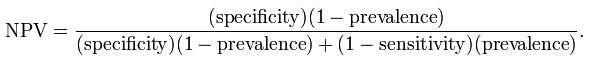

How do you get an NPV?
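One standard way to write it: the NPV is the fraction of negative results that are true negatives (TN) rather than false negatives (FN). Expressed per patient, with $p$ for the prevalence and sens/spec for the test’s sensitivity and specificity (notation chosen for this sketch):

$$
\mathrm{NPV} = \frac{TN}{TN + FN} = \frac{\mathrm{spec}\,(1 - p)}{\mathrm{spec}\,(1 - p) + (1 - \mathrm{sens})\,p}
$$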

What do you need to know about that calculation?

Specificity and sensitivity are properties of the test itself and should stay constant, whereas the prevalence varies from population to population.

To save you working it out: the NPV goes down as the prevalence of the disease goes up.
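Writing the NPV as $\mathrm{spec}(1-p) / [\mathrm{spec}(1-p) + (1-\mathrm{sens})\,p]$, with $p$ the prevalence (notation chosen for this sketch), and dividing top and bottom by $\mathrm{spec}(1-p)$ makes the direction of the effect visible:

$$
\mathrm{NPV} = \frac{1}{1 + \dfrac{1 - \mathrm{sens}}{\mathrm{spec}} \cdot \dfrac{p}{1 - p}}
$$

With sensitivity and specificity held fixed, the pre-test odds $p/(1-p)$ rise as $p$ rises, so the denominator grows and the NPV falls. The factor $(1-\mathrm{sens})/\mathrm{spec}$ is the negative likelihood ratio.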

So if your quoted NPV of 98% was worked out in a population with only 5% prevalence, you can’t expect the same NPV when you apply the test to a population with 50% prevalence.
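A minimal sketch of that comparison in Python. The sensitivity (92%) and specificity (20%) are hypothetical values picked so that the NPV comes out at roughly 98% at 5% prevalence; they are not taken from any D-dimer study, and the function name `npv` is just for illustration.

```python
# Sketch: NPV of the SAME test in two populations with different prevalence.
# SENS and SPEC are HYPOTHETICAL, chosen so the NPV is ~98% at 5% prevalence.

def npv(sensitivity: float, specificity: float, prevalence: float) -> float:
    """Negative predictive value = TN / (TN + FN), using per-patient fractions."""
    true_neg = specificity * (1 - prevalence)    # healthy patients who test negative
    false_neg = (1 - sensitivity) * prevalence   # diseased patients who test negative
    return true_neg / (true_neg + false_neg)

SENS, SPEC = 0.92, 0.20  # hypothetical test characteristics

for prevalence in (0.05, 0.50):
    print(f"prevalence {prevalence:.0%}: NPV = {npv(SENS, SPEC, prevalence):.1%}")
```

Nothing about the test changes between the two lines of output; only the population does, and the NPV drops from about 98% to about 71%.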

[As an aside, if you’re trying to rule out a disease in a population with a prevalence of 50%, you sure as hell better be using a VERY sensitive test if you want to lower your post-test probability sufficiently.]
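To put a number on “VERY sensitive”, here is a rough sketch that rearranges the post-test probability after a negative result (which is just 1 − NPV) to find the minimum sensitivity needed to get below a chosen miss threshold. The 2% threshold and the two specificity values are hypothetical examples, and both function names are made up for this illustration.

```python
# Sketch: how sensitive must a test be for a NEGATIVE result to push the
# post-test probability below a chosen miss threshold?
# The 2% threshold and the specificity values are HYPOTHETICAL examples.

def post_test_prob_negative(sens: float, spec: float, pretest: float) -> float:
    """Probability of disease given a negative result (= 1 - NPV)."""
    false_neg = (1 - sens) * pretest
    true_neg = spec * (1 - pretest)
    return false_neg / (false_neg + true_neg)

def required_sensitivity(spec: float, pretest: float, threshold: float) -> float:
    """Smallest sensitivity for which post_test_prob_negative(...) <= threshold.

    Rearranges (1 - sens) * p / ((1 - sens) * p + spec * (1 - p)) <= t
    into        sens >= 1 - t * spec * (1 - p) / (p * (1 - t)).
    """
    return 1 - threshold * spec * (1 - pretest) / (pretest * (1 - threshold))

for spec in (0.2, 0.5):
    sens_needed = required_sensitivity(spec, pretest=0.5, threshold=0.02)
    print(f"spec {spec:.0%}: need sensitivity >= {sens_needed:.1%}")
```

Under these assumptions, a 50% pre-test probability demands a sensitivity of roughly 99% or more, well above the low 90s.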

A much more useful test characteristic in our line of work is high sensitivity. A D-dimer’s sensitivity is probably more in the low 90s, which is why it’s kind of a useless test if you’re working up someone with a pre-test probability in the 50% range.

Now as usual I’m sharing my own ignorance here, but does anyone know of an occasion where you’d genuinely want to know the NPV of a test?

Thanks for the great post. Link was suggested by Mike Cadogan of #LITFL fame!

cheers pranab

Agree with the lack of real world applicability of the NPV.

In the real world we need to know (or at least estimate) 2 things when determining the utility of an investigation:

1. pretest probability

2. likelihood ratio for the investigation

That’s what we need to know to determine the post-test probability.

Of course, we also need to know what thresholds of probability need to be met in order to make a clinical decision (i.e. are we happy to rule out MI if there is a 10% chance? 1%? 0.1%?..)
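A minimal sketch of that workflow, using Bayes’ theorem in odds form (post-test odds = pre-test odds × likelihood ratio) and then comparing against a rule-out threshold. The test characteristics (sensitivity 92%, specificity 20%, giving LR− = 0.4) are hypothetical, the 10% threshold is simply the first figure from the comment above, and the helper names are invented for illustration.

```python
# Sketch: pre-test probability -> likelihood ratio -> post-test probability,
# plus a rule-out threshold check. All numbers below are HYPOTHETICAL.

def prob_to_odds(p: float) -> float:
    return p / (1 - p)

def odds_to_prob(odds: float) -> float:
    return odds / (1 + odds)

def negative_lr(sens: float, spec: float) -> float:
    """Negative likelihood ratio: LR- = (1 - sensitivity) / specificity."""
    return (1 - sens) / spec

def post_test_prob(pretest: float, lr: float) -> float:
    """Bayes' theorem in odds form: post-test odds = pre-test odds x LR."""
    return odds_to_prob(prob_to_odds(pretest) * lr)

def can_rule_out(pretest: float, lr: float, threshold: float) -> bool:
    """True if the post-test probability falls below the chosen miss threshold."""
    return post_test_prob(pretest, lr) < threshold

RULE_OUT_THRESHOLD = 0.10                   # hypothetical; pick your own miss rate
lr_neg = negative_lr(sens=0.92, spec=0.20)  # hypothetical test, LR- = 0.4

for pretest in (0.05, 0.50):
    p_after = post_test_prob(pretest, lr_neg)
    ok = can_rule_out(pretest, lr_neg, RULE_OUT_THRESHOLD)
    print(f"pre-test {pretest:.0%} -> post-test {p_after:.1%}, rule out? {ok}")
```

The same negative result is enough at a low pre-test probability but leaves a post-test probability of almost 30% when the pre-test probability is 50%.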

I highly recommend Scott Weingart’s ‘old’ (and much neglected) book on clinical decision making as an easy introduction to evidence-based decision making in emergency medicine: http://scottweingart.com/emergency-medicine-decision-making.htm

Cheers,

Chris

Hi Chris, sorry for the late reply, your comment had somehow ended up in the spam box!

Far and away the most important numbers discussion we need to have is about acceptable miss rates, and I’ve heard some good stuff on that from Dave Schriger and Jerry Hoffman.

I heard Scott talk about the book the other day on one of the podcasts and added it to my wish list!

I find likelihood ratios very useful. I confess I’ve never really understood odds or hazard ratios, though I’m not sure they’re of that much use to us.

(From an expat over in Australia, and a TCD graduate)

The bullshit-detector that gets to the nitty gritty under all kinds of dressed-up stats is the number-needed-to-treat (not to mention the oft-forgotten number-needed-to-harm!).
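A minimal sketch of the arithmetic behind both numbers: one over the absolute difference in event rates between the two arms of a trial. The function name and the trial numbers are hypothetical, picked to show how an impressive-sounding relative figure can hide a modest absolute effect.

```python
# Sketch: number needed to treat (NNT) and number needed to harm (NNH)
# from event rates in two trial arms. The event rates below are HYPOTHETICAL.
import math

def number_needed(rate_control: float, rate_treated: float) -> float:
    """1 / absolute risk difference: an NNT when the treatment lowers a bad
    outcome, an NNH when the outcome is a harm the treatment increases."""
    absolute_difference = abs(rate_control - rate_treated)
    if absolute_difference == 0:
        return math.inf  # no difference between arms: no one benefits or is harmed
    return 1 / absolute_difference

# Hypothetical trial: deaths fall from 10% to 8% (a "20% relative risk reduction")...
nnt = number_needed(rate_control=0.10, rate_treated=0.08)
# ...while major bleeds rise from 1% to 2% (a "100% relative increase").
nnh = number_needed(rate_control=0.01, rate_treated=0.02)
print(f"NNT = {nnt:.0f}, NNH = {nnh:.0f}")
```

In this made-up example, 50 patients need treatment for one to avoid death, and one in 100 has a major bleed.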

David Newman’s site thennt.com (you mention him elsewhere) is one of the best resources out there for sifting through oft-misunderstood evidence. Keep up the blog mate, it’s a good angle! Domhnall

cheers for the encouragement

absolute differences (and NNTs of course) are great for treatment

despite that, I still give heparin to ACS patients because of the protocols in our hospitals and because the cardiology guys like it! Not that that’s great evidence, but it gets me through a day without a fight!

andy